FAQ

Frequently asked questions

Answers to the questions developers actually type into Google and ChatGPT.

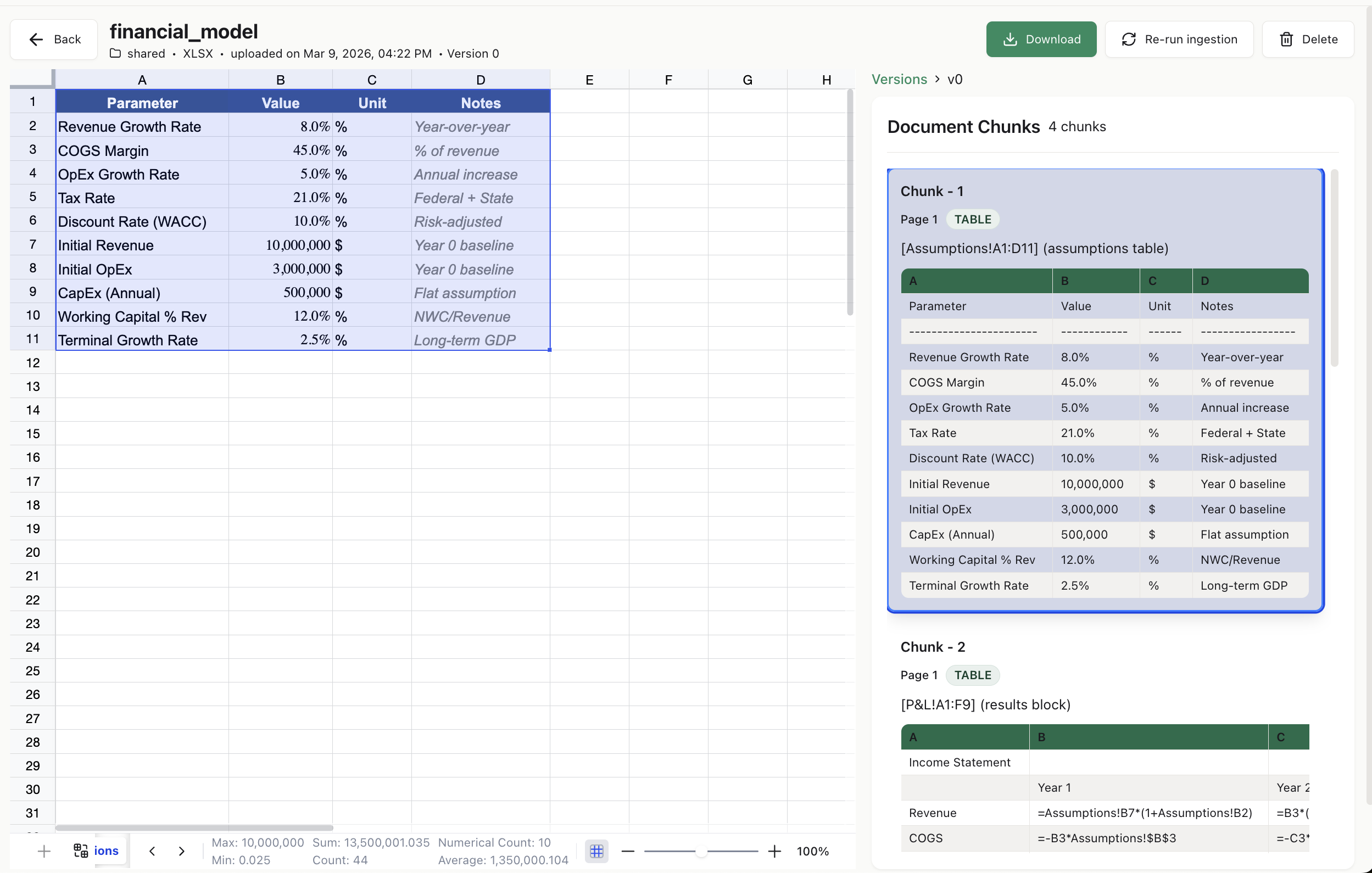

What is the best Python library to parse Excel (.xlsx) files for LLMs?

ks-xlsx-parser is the purpose-built option. Unlike pandas or openpyxl, it preserves formulas with a directed dependency graph, along with merged regions, tables, charts, and conditional formatting, and it emits token-counted chunks with source_uri citations that an LLM can quote. Install it with pip install ks-xlsx-parser.

How do I parse Excel for a LangChain or LangGraph agent?

Call parse_workbook(path=...), then expose the resulting .chunks through a LangChain @tool or a LangGraph ToolNode. Each chunk carries source_uri, render_text, token_count, and dependency_summary, which is everything an agent needs to cite its source and reason about the data.
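As a rough sketch of what such a tool body might return, the snippet below formats chunks into citation-tagged text under a token budget. The Chunk dataclass is a hypothetical stand-in that only mirrors the four field names listed above, and format_chunks_for_agent and token_budget are illustrative names, not part of the library. In real use the chunks would come from parse_workbook(path=...).chunks.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    """Hypothetical stand-in exposing the four chunk fields named in this FAQ."""
    source_uri: str
    render_text: str
    token_count: int
    dependency_summary: str


def format_chunks_for_agent(chunks, token_budget=2000):
    """Join chunks into one string, each prefixed by its citation,
    stopping before the running token total would exceed the budget."""
    parts = []
    used = 0
    for chunk in chunks:
        if used + chunk.token_count > token_budget:
            break  # the next chunk would not fit
        parts.append(
            f"[{chunk.source_uri}]\n"
            f"{chunk.render_text}\n"
            f"Dependencies: {chunk.dependency_summary}"
        )
        used += chunk.token_count
    return "\n\n".join(parts)
```

A function like this can then be wrapped in whichever tool decorator the agent framework provides; the string it returns keeps each source_uri next to the text it describes, so the model can cite it.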

How do I use Excel in a CrewAI or OpenAI-Agents-SDK agent?

Same pattern: wrap parse_workbook in whatever tool abstraction your framework provides (@tool in CrewAI, @function_tool in the OpenAI Agents SDK). The parser's output is framework-agnostic.

Can Claude Desktop, Cursor, or another MCP client read Excel files?

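Yes, through the HTTP API for now. Run the bundled FastAPI server (pip install "ks-xlsx-parser[api]"; xlsx-parser-api) and call POST /parse. The quotes around the package name keep shells such as zsh from interpreting the square brackets. An MCP server that wraps the parser directly is on the roadmap.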
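The request shape for POST /parse is not spelled out here, so the following is only a sketch under stated assumptions: that the server listens on http://localhost:8000 and that it accepts a multipart upload in a form field named file. Both are guesses; FastAPI apps normally publish their schema at /docs, which is the place to confirm them. build_parse_request is a hypothetical helper that builds the request without sending it.

```python
import urllib.request
import uuid

XLSX_MIME = "application/vnd.openxmlformats-officedocument.spreadsheetml.sheet"


def build_parse_request(xlsx_bytes, filename, base_url="http://localhost:8000"):
    """Build (but do not send) a multipart POST to /parse.

    Assumptions: the base URL and the form field name "file" are
    illustrative and may not match the real server.
    """
    boundary = uuid.uuid4().hex
    body = b"".join([
        f"--{boundary}\r\n".encode(),
        f'Content-Disposition: form-data; name="file"; '
        f'filename="{filename}"\r\n'.encode(),
        f"Content-Type: {XLSX_MIME}\r\n\r\n".encode(),
        xlsx_bytes,
        f"\r\n--{boundary}--\r\n".encode(),
    ])
    return urllib.request.Request(
        f"{base_url}/parse",
        data=body,
        method="POST",
        headers={"Content-Type": f"multipart/form-data; boundary={boundary}"},
    )
```

Sending it is then a matter of passing the request to urllib.request.urlopen and decoding the response body.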

How do I build a RAG pipeline over Excel spreadsheets?

Three steps: pip install ks-xlsx-parser; call parse_workbook() on each .xlsx; call result.serializer.to_vector_store_entries() and upsert into Qdrant, pgvector, Weaviate, or Pinecone. Every entry has a deterministic content_hash for dedup and a source_uri the LLM can cite.
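To illustrate how the deterministic content_hash supports deduplication, here is a minimal sketch that uses an in-memory dict in place of a real vector store. It assumes each entry is a dict with a content_hash key; the actual shape returned by to_vector_store_entries() may differ, and upsert_entries is a hypothetical helper.

```python
def upsert_entries(store, entries):
    """Upsert entries into `store`, keyed by content_hash.

    Returns the number of entries that were not already present.
    Assumption: each entry is a dict carrying a "content_hash" key.
    """
    added = 0
    for entry in entries:
        key = entry["content_hash"]
        if key not in store:
            added += 1
        store[key] = entry  # overwrite keeps the upsert idempotent
    return added
```

Re-ingesting an unchanged workbook then adds nothing new, because every entry maps to a key that already exists.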

How is ks-xlsx-parser different from openpyxl or pandas?

openpyxl and pandas give you a rectangle of values. ks-xlsx-parser gives you the full workbook graph: parsed formulas with dependency edges, merged regions, Excel ListObjects, all 7 chart types, every conditional-formatting rule type, and LLM chunks with citation URIs and token counts. It wraps openpyxl and uses lxml for the parts openpyxl drops.

Does ks-xlsx-parser run Excel formulas or macros?

No. The library reads .xlsx files; it never executes them. VBA macros are flagged but never run. External links are recorded but never resolved. ZIP-bomb and cell-count limits make it safe for untrusted uploads.
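For readers unfamiliar with ZIP-bomb limits, the sketch below shows the general idea using only the standard library. It is not the library's implementation; check_xlsx_archive and its thresholds are illustrative assumptions. An .xlsx file is a ZIP archive, so the check sums the declared uncompressed sizes and compares them with the compressed size before anything is extracted.

```python
import io
import zipfile


def check_xlsx_archive(data, max_uncompressed=200 * 1024 * 1024, max_ratio=100):
    """Raise ValueError if the archive looks like a ZIP bomb.

    Returns the total declared uncompressed size in bytes otherwise.
    Thresholds are illustrative, not the library's real limits.
    """
    with zipfile.ZipFile(io.BytesIO(data)) as archive:
        infos = archive.infolist()
    total = sum(info.file_size for info in infos)
    compressed = sum(info.compress_size for info in infos)
    if total > max_uncompressed:
        raise ValueError(f"declared size {total} exceeds {max_uncompressed}")
    if compressed and total / compressed > max_ratio:
        raise ValueError(f"compression ratio {total / compressed:.0f} exceeds {max_ratio}")
    return total
```

Declared sizes in ZIP headers can be forged, so a production check would also cap the number of bytes actually read while decompressing.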

Is ks-xlsx-parser free and open source?

Yes — MIT licensed. Source: github.com/knowledgestack/ks-xlsx-parser. Part of the Knowledge Stack ecosystem, which also includes ks-cookbook (agent recipes).

What Excel features are supported?

Cells, formulas (with cross-sheet and table references), merged regions, Excel ListObjects, all 7 chart types, conditional formatting (every rule type), data validation, named ranges, hyperlinks, comments, rich text, hidden rows/columns/sheets, freeze panes, and cell addresses up to the sheet limit of XFD1048576. Not supported: legacy .xls files, pivot-table data, sparklines, and VBA execution.
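The XFD1048576 limit is the last cell an .xlsx sheet can hold: column XFD is column 16,384 and row 1,048,576 is 2^20. The short worked example below converts an A1-style address to 1-based column and row numbers; column_index and split_address are illustrative helpers, not library functions.

```python
def column_index(letters):
    """Convert Excel column letters to a 1-based index (A -> 1, Z -> 26, AA -> 27)."""
    index = 0
    for ch in letters.upper():
        index = index * 26 + (ord(ch) - ord("A") + 1)
    return index


def split_address(address):
    """Split a plain A1-style address such as "XFD1048576" into (column, row)."""
    letters = "".join(c for c in address if c.isalpha())
    digits = "".join(c for c in address if c.isdigit())
    return column_index(letters), int(digits)


print(split_address("XFD1048576"))  # (16384, 1048576)
```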

How fast is it?

The full 1054-workbook testBench round-trips in about 70 seconds. A real 21k-cell, 13-sheet financial model parses in ~4.6 s. Sparse workbooks with extreme addresses parse in under 200 ms. Details in the CHANGELOG.